TECHNICAL ADVISORS’ COLUMN: Tony McGrail

There’s a 70% chance of your transformer failing in the next year.

That’s a prediction (or forecast) which can’t be wrong!

If we say there’s a 70% chance of failure as an outcome and the transformer fails, then the outcome falls in the 70%, and if it doesn’t fail the outcome falls in the 30%. We’re covered come what may. The only ways to get this type of prediction wrong are to say something will definitely 100% happen, and it doesn’t, or say that something definitely 100% will NOT happen, but it does [1]. But avoid the two definite statements, and any other version of the prediction cannot be wrong.

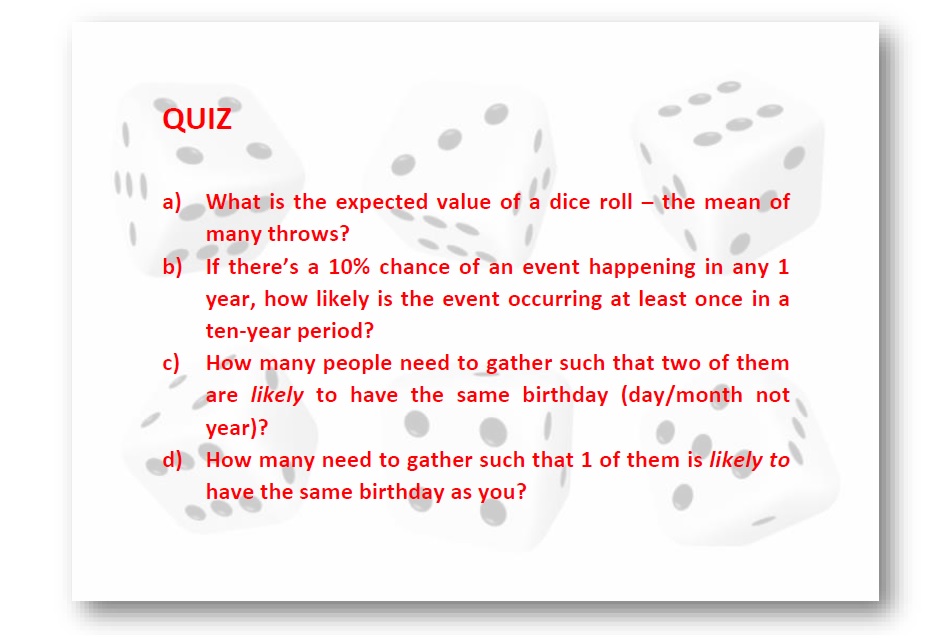

How can we check the accuracy of the prediction if the event only happens once? It’s not like repeated rolls of a dice, which we can predict with some statistical accuracy.

If I roll a standard, fair, 6-sided dice, the chances of rolling a 4 are 1 in 6 (16.67%). If I predict I’ll get a 4, say, 50% of the time, that’s likely a poor prediction – which we can check through repeated rolls. We can roll the dice many times, but we can’t rerun the year many times. What we can do is look at similar situations, in this case other transformers, and see how well our predictions of failure probability stand up in each case: compare the estimated probability of failure with the actual outcome at the end of the year for each transformer. If I estimate a 5% chance of failure for a particular transformer and it does fail, I’m out by 95%. But how do we rate the overall prediction accuracy across the population? We can look to weather forecasters!

Some years ago, the weather forecasting folks put together a means to measure the accuracy of predictions or forecasts, called the Brier score [2]. The score tells you how well your forecast probabilities for rain, or failure, or whatever, across a number of locations and times, relate to what actually happened. It does this through a mean squared error between each forecast probability and the actual outcome (1 if the event happened, 0 if it didn't): the lower the Brier score, the better the set of forecasts [2]. The same approach would apply to transformer failure probabilities and can be used to check the accuracy of the forecast; it is something we’re working on at present.
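To make the idea concrete, here is a minimal sketch of the Brier score calculation in Python, following the definition in [2]: the average of the squared differences between each forecast probability and the outcome. The function name `brier_score` and the five-transformer fleet are made up for illustration; they are not real data or our working method.

```python
def brier_score(forecasts, outcomes):
    """Mean squared difference between forecast probabilities and outcomes.

    forecasts: probabilities between 0 and 1 (e.g. 0.05 for a 5% chance)
    outcomes:  1 if the event (failure) happened, 0 if it did not
    """
    if len(forecasts) != len(outcomes) or len(forecasts) == 0:
        raise ValueError("need two non-empty lists of equal length")
    squared_errors = [(f - o) ** 2 for f, o in zip(forecasts, outcomes)]
    return sum(squared_errors) / len(squared_errors)


# Hypothetical fleet: estimated failure probability for the year,
# and what actually happened by year end (1 = failed, 0 = survived).
predicted = [0.05, 0.70, 0.10, 0.02, 0.30]
failed = [0, 1, 0, 0, 1]

fleet_score = brier_score(predicted, failed)

# A "shrug" forecast of 50% for every unit, for comparison.
coin_flip_score = brier_score([0.5] * len(failed), failed)

# The single transformer from the text: 5% estimate, and it did fail.
single_miss = brier_score([0.05], [1])

print(f"fleet forecast: {fleet_score:.4f}")
print(f"50% everywhere: {coin_flip_score:.4f}")
print(f"5% but failed:  {single_miss:.4f}")
```

A perfect set of forecasts scores 0 and the worst possible set scores 1. Saying 50% for everything always scores 0.25, which makes a handy yardstick: a set of forecasts worth having should come in below that.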

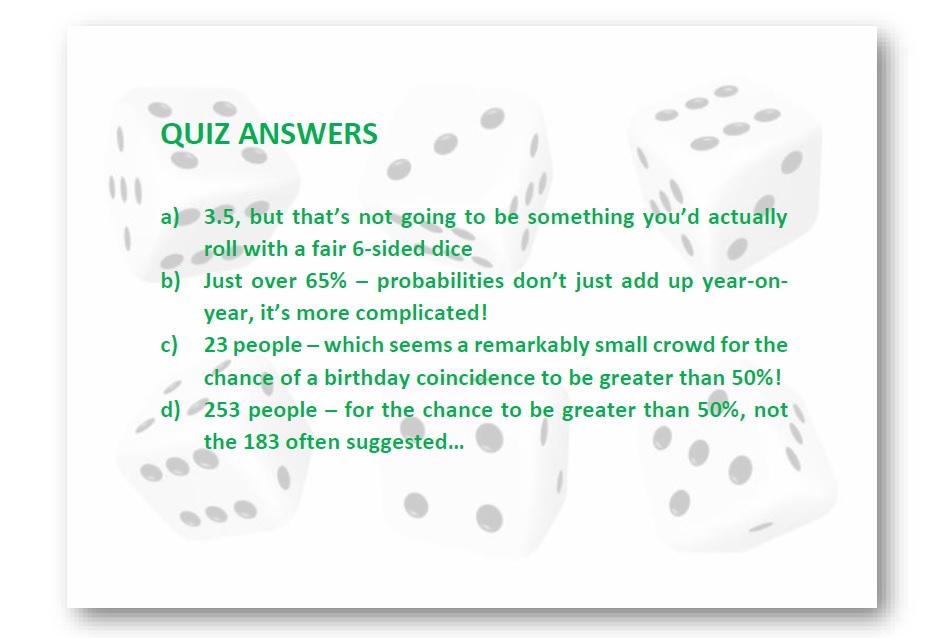

If you have an interest in this topic, please contact the author! When working with probabilities I recommend checking results with an expert as things can get complicated and can sometimes be counter-intuitive [3]. Some quiz questions may help illustrate.

References

[1] Barbara Mellers in “Rational Soothsaying”, https://www.bbc.co.uk/programmes/m00132v9

[2] Brier score, Wikipedia, https://en.wikipedia.org/wiki/Brier_score

[3] Peter Donnelly, “How Juries are Fooled by Statistics”, TED Talk, 2007

Acknowledgement

Thanks to Rhonda, Richard and Vanessa for their suggestions.